U.S. Military Insect Drones (powered by LabVIEW?) July 15, 2011

Posted by emiliekopp in industry robot spotlight, labview robot projects. Tags: drone, insect, labview, mav, micro_aerial_vehicle, military

Do the screens of any of the monitors look familiar?

Read the news coverage of these robots here:

Micro-machines are go: The U.S. military drones that are so small they even look like insects

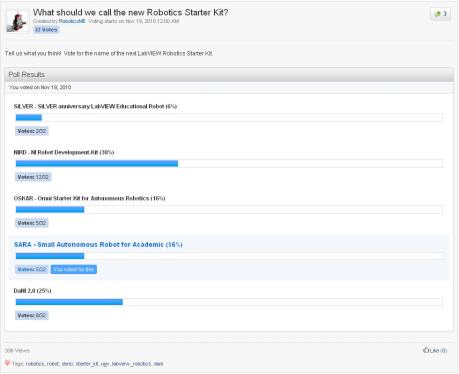

Last chance to vote: Help name the new NI robotics product November 23, 2010

Posted by emiliekopp in industry robot spotlight. Tags: poll, robotics, starter_kit

Rumor is the NI R&D team is developing a new robotics starter kit. I’ve seen some early prototypes and while I can’t provide any specs (without losing my job, of course), I can divulge that the team is looking for outside help. Specifically, they need outside opinion on what to name the new robot. What do you think it should be?

Visit the NI Robotics Code Exchange to cast your vote.

Hurry because the poll ends soon.

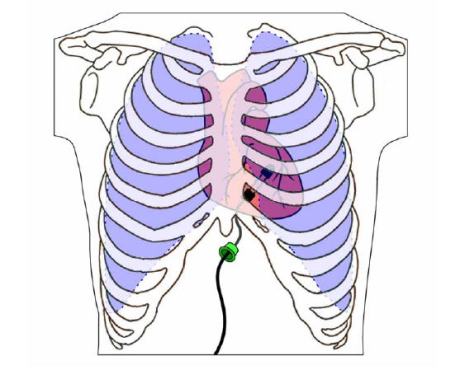

HeartLander: A Miniature Mobile Robot That Crawls Around the Heart, Powered by LabVIEW November 16, 2010

Posted by emiliekopp in industry robot spotlight, labview robot projects. Tags: carnegie melon, heartlander, labview, michio kaku, robotics institute, surgery

Recall from the NIWeek 2010 Day Three Keynote: Dr. Michio Kaku, theoretical physicist, predicted the next 20 years of technology development, describing tiny robots that would travel throughout the body, taking readings, administering medication and performing tiny microscopic procedures, all while we go about our daily routines.

Researchers at the Carnegie Mellon Robotics Institute are one step ahead of us, delivering the HeartLander, a miniature robotic device that can crawl around the surface of the heart, taking measurements and performing simple surgical tasks, all while the heart continues to pump blood throughout the body. I stumbled upon Nicholas Patronik’s Ph.D. thesis describing this project (and I encourage everyone to check it out). Here is what I found out:

Robot-assisted surgery

NI technologies have been used for robot-assisted surgeries before, but the HeartLander robot addresses two major challenges of cardiac therapy and surgery:

• Gaining access to the heart without opening the chest

• Operating on the heart while it is beating

Several options for tackling these challenges exist today. Thoracoscopic techniques use laparoscopic tools inserted through the chest cavity to operate on the beating heart (think DaVinci Robot). While this avoids cracking open the rib cage (ouch!), even less invasive methods exist for accessing the heart and performing simpler procedures, like dye injections. Percutaneous transvenous techniques access inner organs through main arteries and veins. For example, a doctor can guide a heart stent through the veins in your thigh to treat blockages, and this procedure is performed on an outpatient basis. While these transvenous procedures are easier to recover from, thoracoscopic techniques offer much more flexibility in the complexity of surgical operations that can be performed.

The HeartLander robot is considered a hybrid of these two approaches: it achieves the fine control of thoracoscopic techniques while still allowing the procedure to be performed on an outpatient basis, like the percutaneous transvenous techniques. It adheres to and traverses the heart’s surface, the epicardium, providing a tool for precise and stable interaction with the beating heart. Even better, it can access difficult-to-reach locations of the heart such as the posterior wall of the left ventricle (the side of your heart that faces your back).

How it works

The HeartLander is launched onto the surface of the beating heart through a small puncture underneath the bottom of the sternum. From there, the robot steadily traverses the epicardium like an inchworm. Offboard linear motors actuate the robot forward while solenoids regulate vacuum pressure to suction pads. Watch a video of early prototypes inching across an inflated balloon, a synthetic beating heart and a porcine beating heart to see HeartLander’s motion in action (warning: the video clips get progressively graphic in nature).
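The inchworm gait described above can be sketched as a short repeating sequence. This is only a toy illustration, not the HeartLander control code (which runs in LabVIEW): the two-pad layout, the `Crawler` class, the step order, and the 5 mm stride are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Crawler:
    """Toy two-pad inchworm model; positions are in mm along a straight line."""
    rear: float = 0.0
    front: float = 10.0      # assumed resting gap between the two pads
    rear_grip: bool = True   # True = vacuum on, pad holds the surface
    front_grip: bool = True

    def step(self, stride: float) -> None:
        """One gait cycle: advance the front pad, then bring the rear pad up."""
        # 1. Release the front pad while the rear pad anchors the robot.
        self.front_grip = False
        # 2. The motors push the free front pad forward.
        self.front += stride
        # 3. The front pad grips again, so both pads hold.
        self.front_grip = True
        # 4. Release the rear pad; the front pad is now the anchor.
        self.rear_grip = False
        # 5. The motors pull the free rear pad forward by the same amount.
        self.rear += stride
        # 6. The rear pad grips again and the cycle can repeat.
        self.rear_grip = True


robot = Crawler()
for _ in range(3):
    robot.step(stride=5.0)
print(robot.rear, robot.front)  # 15.0 25.0
```

The point of the ordering is that at least one pad is always gripping, which is what lets a crawler like this stay attached to a moving surface.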

An umbilical tether sends and receives information between the HeartLander and the control station, where the pressure to the suction pads is monitored and controlled to maintain grip at all times. The mobility of the robot is semiautonomous: it uses a pure pursuit tracking algorithm to navigate to predetermined surface targets, and can also be controlled via teleoperation.
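For readers unfamiliar with pure pursuit, here is a minimal planar sketch of the idea: the robot steers along the circular arc that passes through its current position and the target point. The thesis applies the algorithm on the curved surface of the heart, so the flat 2-D frame, the function name, and the parameters below are assumptions for illustration only.

```python
import math


def pure_pursuit_curvature(pose, target):
    """Curvature of the arc that carries the robot from `pose` through `target`.

    pose   -- (x, y, heading), heading in radians
    target -- (x, y) goal point in the same frame
    Positive curvature turns left, negative turns right.
    """
    x, y, heading = pose
    dx, dy = target[0] - x, target[1] - y
    # Lateral offset of the target, expressed in the robot's own frame.
    lateral = -math.sin(heading) * dx + math.cos(heading) * dy
    dist_sq = dx * dx + dy * dy
    if dist_sq == 0.0:
        return 0.0  # already on the target; no steering needed
    # Classic pure pursuit relation: curvature = 2 * lateral offset / distance^2.
    return 2.0 * lateral / dist_sq


print(pure_pursuit_curvature((0.0, 0.0, 0.0), (10.0, 0.0)))  # 0.0
print(pure_pursuit_curvature((0.0, 0.0, 0.0), (5.0, 5.0)))   # 0.2
```

A target straight ahead gives zero curvature (drive straight), while a target 5 mm ahead and 5 mm to the left gives a curvature of 0.2 per mm, that is, a left turn of radius 5 mm.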

Two drive wires transmit the actuation from off-board motors for locomotion. A 6-DOF electromagnetic tracking sensor is mounted to the front of the body.

It can navigate to any location on the epicardium at speeds of up to 4 mm per second and acquire localization targets within 1 mm. But what I think is particularly exciting about this application is that the robot’s motion is controlled entirely with LabVIEW software and NI data acquisition hardware.

So far, the HeartLander has been successfully demonstrated through a series of closed-chest, beating-heart porcine studies. We don’t have tiny robotic heart worms crawling around in us just yet. But we’re certainly excited to see how the HeartLander project progresses and we’re proud that NI technologies are helping pave the way for incredible, futuristic innovations like this.

Learn more about the HeartLander project on CMU’s website

Discover other autonomous robots designed and controlled using LabVIEW software

Georgia Tech Researchers Teach Robot to Lie September 13, 2010

Posted by emiliekopp in industry robot spotlight. Tags: ai, artificial intelligence, georgia tech, gt, robot apocolypse, robot uprising, skynet

Georgia Tech Regents professor Ronald Arkin (left) and research engineer Alan Wagner conducted simulations and experiments as part of what is believed to be the first detailed examination of robot deception.

Is this the first step towards a Skynet-like robot takeover? You decide. Read the full article on the Georgia Institute of Technology Research News blog.

(I, for one, welcome our robot overlords)

Snake-like robot developed by the Army – powered by LabVIEW July 28, 2010

Posted by emiliekopp in industry robot spotlight. Tags: army, labview, military, robot, snake, snake-robotics

Army technology expands snake-robotics

The story highlights a snake-robot developed by the U.S. Army Research Laboratory. The robo-snake is biomimetic, meaning it maneuvers just like a real snake would, pushing off ground surfaces to propel itself. It can crawl, swim, climb or shimmy through narrow spaces while transmitting images to the Soldier operator. And it’s scalable, so a robo-snake can be built however large or small its developers would like it to be. It’s expected to help with search-and-rescue and reconnaissance missions.

I’m sure you recognized the software on the command laptop’s screen too. Yep, that’s LabVIEW. Developers are using the graphical programming language to quickly and cost-effectively interface to the robot’s sensors and control its actuators. They can rapidly build and test early prototypes and ultimately deliver this dexterous robot to the field more quickly, saving lives and taxpayer dollars.

Vecna BEAR Military UGV: A Jack of All Trades July 14, 2010

Posted by emiliekopp in industry robot spotlight. Tags: bear, crio, labview, military, robot, ugv, vecna

I’ve written about Vecna Robotics’ Battlefield Extraction-Assist Robot (BEAR) before and am familiar with its development process. Its design engineers used LabVIEW and NI CompactRIO to rapidly build and test early prototypes and win defense contracts.

BotJunkie recently featured a video that captures the Vecna BEAR in action. Admittedly, one can see that the actual “extraction” of military casualties still looks a bit awkward and probably needs more work. I’m sure operating a robot with so many degrees of freedom in a potentially hostile environment is extremely difficult and requires an enormous amount of practice. Bottom line, this is definitely one of the more friendly military robots that is helping save lives.

But once you take handling an injured human out of the equation, the robot can actually serve several other purposes that may not require as much poise. For instance, the BEAR can help with more logistical tasks, like handling munitions and delivering supplies. Its payload capacity is a whopping 500 lbs, so it could definitely help as an extra hand on the battlefield. And because of its dexterity, it could perform maintenance functions as well, such as inspection, decontamination and refueling. Saving time and effort allows troops to focus on the task at hand, which indirectly reduces the risk soldiers are exposed to.

So the BEAR is certainly a robotic jack-of-all-trades that could prove extremely useful when fully deployed. It’s fun to imagine full convoys of these surprisingly cute robots in the future (by the way, the video explains the cuteness factor).

Blind Driver Challenge – Next-Gen Vehicle to Appear at Daytona July 2, 2010

Posted by emiliekopp in industry robot spotlight, labview robot projects. Tags: bdc, blind driver car, daytona, nfb, romela, ugv, virginia tech

The National Federation of the Blind (NFB) has just announced some ambitious plans inspired by RoMeLa. According to their recent press release, they have partnered with Virginia Tech to demonstrate the first street-legal vehicle that can be driven by the blind.

We saw RoMeLa’s work a while back and featured the technology used in their first prototype. The NFB was immediately convinced of the viability that RoMeLa’s prototype demonstrated and put them to work on a street-legal vehicle with some grant money. The next-generation vehicle will incorporate their non-visual interfaces with a Ford Escape Hybrid, reusing many of the technologies they used in their prototype. The drive-by-wire system will be semi-autonomous and use auditory and haptic cues to provide obstacle detection for a blind driver.

The first public demo will be at the Daytona International Speedway during the Rolex 24 racing event. News coverage is popping up all over the place.

If you can’t make it to Daytona, RoMeLa will be showing off their first prototype (red dune buggy) at NIWeek in August, in case anyone wants to take it for a spin.

Up Close and Personal with the Predator UAV June 23, 2010

Posted by emiliekopp in industry robot spotlight. Tags: border_patrol, drone, predator, UAV, video

I’ve said several times over that military robots often get a bad rap, when in fact, they are helping save time, effort and ultimately, lives.

Consider Unmanned Aerial Vehicles (UAVs): these robots can serve as the military’s eyes and ears in the sky, helping provide strategic surveillance information about identified and possible threats. They can fly undetected at extreme heights and use a variety of sensors, like thermal cameras, to detect things invisible to the naked eye.

These drones aren’t just spying on enemy territory. They can help protect U.S. borders as well. The Predator drone, for instance, is now being commissioned by U.S. Customs and Border Protection to provide remote surveillance of the Texas border.

This video provides an excellent description of how these UAVs actually work. I was most impressed with the amount of redundancy these remotely-operated vehicles must have. Take a look and learn:

A recent news update reports that the border drone flights have been temporarily suspended due to a communications fault experienced during a recent test flight. It just goes to show how extremely cautious we must be when sending out unmanned vehicles to wander around in our atmosphere.

More information about robotic border patrol here.

See how NI technologies are also used on-board unmanned aerial vehicles like the Global Hawk.

ROVs: The paramedics of the Gulf June 2, 2010

Posted by emiliekopp in industry robot spotlight. Tags: bp, crisis, deepwater horizon, fleet, gulf, lmrp, oceaneering, oil, pilot, robot, rov, uuv

We might as well be drilling on Mars. Ever since offshore drilling in the Gulf was approved by the government, oil companies have reached record depths of operation under the ocean surface. How is this possible? Robots, of course. More specifically, Remotely Operated Vehicles (ROVs).

These unmanned systems are able to reach depths that humans cannot. Since we are limited to atmospheric diving, our bodies have never ventured to depths beyond ~2,500 fsw (feet of salt water). And anyone crazy enough to make it even that far must be crammed inside a diving container like Oceaneering’s WASP.

I once had the opportunity to check one of these suits out in person when I interned at Oceaneering. Once the diving cap had been closed, I felt like I had been stuffed in a refrigerator with 2 cm of wiggle room. I cannot even fathom what it might be like to dangle in that sardine can thousands of feet underwater. Keep in mind, 80% of the Gulf’s oil and 45% of its natural gas come from operations in more than 1,000 feet of water, which is classified as “deepwater.”

ROVs, on the other hand, can withstand the pressure at depth and do not require atmospheric diving conditions. These tethered robots act as our eyes, ears and hands under the sea. ROV pilots sit at mission control consoles that would make you think you’re in a video game. Pilots rely on several video feeds, joysticks, knobs, buttons and more to steer and maneuver the robot and operate its manipulators. Completing seemingly simple tasks, like turning a crank on a wellhead, is extremely tricky and requires hundreds of hours of experience and practice to master.
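To put those depths in perspective, here is a rough back-of-the-envelope calculation of the pressure involved. It relies on the common diving rule of thumb that every 33 feet of salt water adds about one atmosphere; the function name and the rounded constants are my own choices for the sketch, not figures from any of the sources above.

```python
FSW_PER_ATM = 33.0    # diving rule of thumb: 33 feet of salt water ~ 1 atmosphere
PSI_PER_ATM = 14.696  # one standard atmosphere in pounds per square inch


def absolute_pressure_atm(depth_fsw: float) -> float:
    """Approximate absolute pressure at depth: 1 atm of air plus the water column."""
    return 1.0 + depth_fsw / FSW_PER_ATM


# Depths mentioned in this post: the "deepwater" threshold, the atmospheric
# diving limit, and the 5,000 feet of water at the leaking well.
for depth in (1000, 2500, 5000):
    atm = absolute_pressure_atm(depth)
    print(f"{depth:>5} fsw: about {atm:.0f} atm ({atm * PSI_PER_ATM:.0f} psi)")
```

By this estimate, the pressure is roughly 31 atmospheres at 1,000 feet, 77 at 2,500 feet and 153 at 5,000 feet.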

Fleets of ROVs have roamed the Gulf, monitoring oil well conditions, dredging sea floors to lay pipeline, and more recently, repairing/cleaning up when things go wrong.

The Gulf Oil Crisis

According to wsj.com, BP now plans to try to contain the flow of oil from the leak with a lower marine riser package (LMRP), or cap. The operation involves a lot of cutting and removing of broken, tangled drilling pipe, called riser. ROVs equipped with diamond saws and gargantuan cutting shears must trim the gnarled remains of the riser that sit atop the blowout preventer. By making a clean cut, BP can make another attempt at capping the valve and siphoning the spewing oil to the surface.

However, BP officials say “there is no certainty” that the operation will work, considering a task like this has never been carried out in 5,000 feet of water. Now I’m beginning to appreciate why this entire project has the name “Deepwater Horizon.”

Teams of ROVs and pilots are now working around the clock to begin Phase 1 of the LMRP containment system. Can robots save the day? Will ROVs be our paramedics of the sea? I sure hope so. I suppose time will tell.

View a live video feed from one of the ROVs helping cap the well in the Gulf Oil Crisis

To learn more about ROV design, simulation and pilot training, you can read these technical case studies on ni.com:

Microsoft ups the ante in the robotics market, makes MSRDS free May 20, 2010

Posted by emiliekopp in industry robot spotlight. Tags: automaton, labview, microsoft, msrds, robotics, ros, simulation, willow garage

An exciting post on the IEEE Spectrum Automaton Blog from Erico Guizzo, fellow robotics blogger: Microsoft Shifts Robotics Strategy, Makes Robotics Studio Available Free

New MSRDS simulation environment (photo from Automaton Blog)

It seems Microsoft is taking a similar approach to Willow Garage and is now offering its development tools for free, in an effort to gain widespread adoption in the robotics market.

I, for one, am excited to see this play out and welcome Microsoft’s increased investment in the robotics market. I’ve seen firsthand that MSRDS plus LabVIEW can be a powerful combination of simulation and graphical programming tools.

But keep in mind, lowering the barrier to entry in robotics means more than making your development software available to the masses through free or low-cost hobbyist options. It also means making sure that technology is put in the hands of tomorrow’s engineers and scientists, and leveraging other key industry players like LEGO and FIRST to reach students.

And it means making your tools open to other design platforms, so the roboticist is free to leverage any and every development tool that makes sense. Disruptive technologies like multicore processors, FPGAs and increasingly sophisticated commercial sensors are providing an exciting landscape for robotics developers out there. They need intuitive design tools to piece everything together. Hopefully Willow Garage (ROS), Microsoft (MSRDS) and NI (LabVIEW) will continue to find ways to optimize this.

What do you think?